Archive for optimization

mostly MC [April]

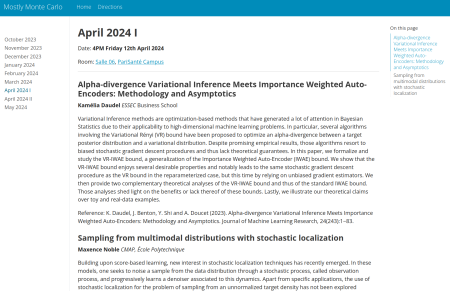

Posted in Books, Kids, Statistics, University life with tags #ERCSyG, Bayesian computational methods, Bayesian inference, denoising, generative models, Institut PR[AI]RIE, JMLR, machine learning, Markov chain Monte Carlo, MCMC, Monte Carlo methods, Monte Carlo Statistical Methods, mostly Monte Carlo seminar, multimodal target, Ocean, optimization, Paris, PariSanté campus, PSC, sampling, score-based generative models, seminar, simulation, stochastic diffusions, stochastic localization, variational autoencoders on April 5, 2024 by xi'an

mostly M[ar]C[h]

Posted in Books, Kids, Statistics, University life with tags diffusions, generative modelling, generative models, Monte Carlo methods, Monte Carlo Statistical Methods, mostly Monte Carlo seminar, normalizing flow, optimal transport, optimization, Paris, PariSanté campus, push-forward distribution, seminar, stochastic diffusions, The Prairie Chair on February 27, 2024 by xi'an

postdoctoral position in computational statistical physics and machine learning

Posted in Statistics with tags computational statistical physics, Ecole des Ponts, INRIA, machine learning, optimization, Paris, postdoctoral position, statistical methodology, thermodynamic integration on February 12, 2019 by xi'an

Michael Jordan’s seminar in Paris next week

Posted in Statistics, University life with tags INRIA, optimization, Paris, seminar, stochastic gradient, variational Bayes methods on June 3, 2016 by xi'an

Next week, on June 7, at 4pm, Michael will give a seminar at INRIA, rue du Charolais, Paris 12. Here is the abstract:

A Variational Perspective on Accelerated Methods in Optimization

Accelerated gradient methods play a central role in optimization, achieving optimal rates in many settings. While many generalizations and extensions of Nesterov’s original acceleration method have been proposed, it is not yet clear what the natural scope of the acceleration concept is. In this paper, we study accelerated methods from a continuous-time perspective. We show that there is a Lagrangian functional, which we call the Bregman Lagrangian, that generates a large class of accelerated methods in continuous time, including (but not limited to) accelerated gradient descent, its non-Euclidean extension, and accelerated higher-order gradient methods. We show that the continuous-time limit of all of these methods corresponds to travelling the same curve in spacetime at different speeds, and in this sense the continuous-time setting is the natural one for understanding acceleration. Moreover, from this perspective, Nesterov’s technique and many of its generalizations can be viewed as a systematic way to go from the continuous-time curves generated by the Bregman Lagrangian to a family of discrete-time accelerated algorithms. [Joint work with Andre Wibisono and Ashia Wilson.]
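To make "accelerated gradient methods" concrete, here is a minimal sketch, not taken from the talk or the paper, comparing plain gradient descent with Nesterov's accelerated gradient in one common form, x_k = y_{k-1} - s∇f(y_{k-1}) and y_k = x_k + (k-1)/(k+2)(x_k - x_{k-1}), on a hypothetical two-dimensional quadratic. The curvatures A and B, the step size s = 1/L and all function names are illustrative assumptions. For smooth convex f and s ≤ 1/L, this scheme guarantees f(x_k) - f* ≤ 2‖x_0 - x*‖²/(s(k+1)²), an O(1/k²) rate versus O(1/k) for gradient descent. Its continuous-time limit is known to be the ODE X'' + (3/t)X' + ∇f(X) = 0, reportedly a Euclidean special case of the Bregman Lagrangian curves mentioned in the abstract.

```python
# Minimal sketch: plain gradient descent vs Nesterov's accelerated gradient
# on a hypothetical ill-conditioned quadratic f(x) = 0.5 * (A*x1^2 + B*x2^2).

A, B = 1.0, 100.0   # hypothetical curvatures (eigenvalues of the Hessian)
STEP = 1.0 / B      # step size s = 1/L, where L = B is the smoothness constant


def f(x):
    """Objective value of the quadratic (minimum f* = 0 at the origin)."""
    return 0.5 * (A * x[0] ** 2 + B * x[1] ** 2)


def grad(x):
    """Gradient of the quadratic."""
    return (A * x[0], B * x[1])


def gradient_descent(x0, n_iter, s=STEP):
    """Plain gradient descent: x_k = x_{k-1} - s * grad f(x_{k-1})."""
    x = tuple(x0)
    for _ in range(n_iter):
        g = grad(x)
        x = (x[0] - s * g[0], x[1] - s * g[1])
    return x


def nesterov(x0, n_iter, s=STEP):
    """Nesterov's accelerated gradient with momentum (k - 1) / (k + 2)."""
    x_prev = tuple(x0)  # x_{k-1}
    y = tuple(x0)       # extrapolated point y_{k-1}, with y_0 = x_0
    for k in range(1, n_iter + 1):
        # gradient step taken from the extrapolated point
        g = grad(y)
        x = (y[0] - s * g[0], y[1] - s * g[1])
        # momentum (extrapolation) step along the last displacement
        beta = (k - 1) / (k + 2)
        y = (x[0] + beta * (x[0] - x_prev[0]),
             x[1] + beta * (x[1] - x_prev[1]))
        x_prev = x
    return x_prev


if __name__ == "__main__":
    x0 = (1.0, 1.0)
    for n in (10, 50, 100):
        print(n, f(gradient_descent(x0, n)), f(nesterov(x0, n)))
```

The only difference between the two loops is the extrapolation step; on this toy problem the slowly curved coordinate is where the momentum pays off, while both methods eliminate the steep coordinate after the first step since s·B = 1.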

(Interested readers need to register to attend the lecture.)