Archive for Bayesian inference

mostly MC [April]

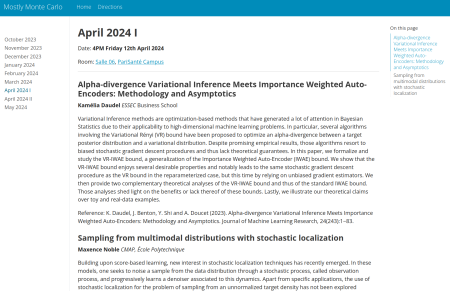

Posted in Books, Kids, Statistics, University life with tags #ERCSyG, Bayesian computational methods, Bayesian inference, denoising, generative models, Institut PR[AI]RIE, JMLR, machine learning, Markov chain Monte Carlo, MCMC, Monte Carlo methods, Monte Carlo Statistical Methods, mostly Monte Carlo seminar, multimodal target, Ocean, optimization, Paris, PariSanté campus, PSC, sampling, score-based generative models, seminar, simulation, stochastic diffusions, stochastic localization, variational autoencoders on April 5, 2024 by xi'an

Bayesian wars of the canals

Posted in pictures, Statistics, Travel, University life with tags Bayesian inference, Belgium, conference, Flanders, Ghent, Ghent University, Italy, MaxEnt 2024, maximum entropy, Sint-Pietersnieuwstraat, UGent, Venice on February 19, 2024 by xi'an

Generalized Poisson difference autoregressive processes [on-line]

Posted in Books, Statistics, University life with tags autoregressive model, Bayesian inference, forecasting, integer sequence, integer time-series, International Journal of Forecasting, MCMC, Poisson difference, publication, stochastic processes, Università Ca' Foscari Venezia, Venice, visiting position on January 21, 2024 by xi'an

Bayesian model averaging with exact inference of likelihood-free scoring rule posteriors [23/01/2024, PariSanté campus]

Posted in pictures, Statistics, Travel, University life with tags ABC, All about that Bayes, Bayesian inference, Bayesian model selection, exact inference, generative model, horseshoe prior, intractable likelihood, likelihood-free inference, neural network, PariSanté campus, scoring rules, seminar, shrinkage estimation on January 16, 2024 by xi'an

A special “All about that Bayes” seminar in Paris (PariSanté campus, 23/01, 16:00-17:00) next week by my Warwick colleague and friend Rito:

Bayesian Model Averaging with exact inference of likelihood-free Scoring Rule Posteriors

Ritabrata Dutta, University of Warwick

A novel application of Bayesian Model Averaging to generative models parameterized with neural networks (GNN) characterized by intractable likelihoods is presented. We leverage a likelihood-free generalized Bayesian inference approach with Scoring Rules. To tackle the challenge of model selection in neural networks, we adopt a continuous shrinkage prior, specifically the horseshoe prior. We introduce an innovative blocked sampling scheme, offering compatibility with both the Boomerang Sampler (a type of piecewise deterministic Markov process sampler) for exact but slower inference and with Stochastic Gradient Langevin Dynamics (SGLD) for faster yet biased posterior inference. This approach serves as a versatile tool bridging the gap between intractable likelihoods and robust Bayesian model selection within the generative modelling framework.
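For readers new to scoring-rule posteriors, here is a minimal sketch of the "faster yet biased" route mentioned in the abstract: plain Stochastic Gradient Langevin Dynamics targeting a generalized posterior π(θ|y) ∝ π(θ) exp{−w Σᵢ S(P_θ, yᵢ)}. Everything below is my own toy assumption and not Rito's method: a one-dimensional Gaussian location simulator X = θ + ε, the energy score with exponent 1 as S, weight w = 1, a N(0, 10²) prior, and an arbitrary step size. There is no neural network, no horseshoe prior and no blocked Boomerang/SGLD scheme, and the helper names `energy_score_grad` and `sgld_scoring_rule` are hypothetical.

```python
import numpy as np


def energy_score_grad(theta, y_batch, eps):
    """Gradient in theta of the energy score (exponent 1), summed over y_batch.

    Toy simulator (assumption): X = theta + eps with eps ~ N(0, 1), so the
    simulated draws are differentiable in theta (reparameterisation trick).
    The term E|X - X'| does not depend on theta here, so only 2 E|X - y| matters,
    and d|x - y|/dx = sign(x - y) almost everywhere.
    """
    x = theta + eps                                      # m simulated draws
    signs = np.sign(x[None, :] - y_batch[:, None])       # shape (batch, m)
    return 2.0 * signs.mean(axis=1).sum()


def sgld_scoring_rule(y, n_iter=6000, batch=20, m=10, step=1e-3,
                      weight=1.0, prior_sd=10.0, seed=0):
    """Plain SGLD on log pi(theta) - weight * sum_i S(P_theta, y_i) (toy sketch)."""
    rng = np.random.default_rng(seed)
    n = len(y)
    theta = 0.0
    samples = np.empty(n_iter)
    for t in range(n_iter):
        idx = rng.choice(n, size=batch, replace=False)  # data minibatch
        eps = rng.standard_normal(m)                     # fresh simulator noise
        # Unbiased minibatch estimate of the gradient of the log generalized posterior.
        grad = (-theta / prior_sd**2
                - weight * (n / batch) * energy_score_grad(theta, y[idx], eps))
        # Langevin step: half-step drift plus Gaussian noise of variance `step`.
        theta = theta + 0.5 * step * grad + np.sqrt(step) * rng.standard_normal()
        samples[t] = theta
    return samples


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    y = rng.normal(2.0, 1.0, size=200)   # toy data with true location 2
    draws = sgld_scoring_rule(y)
    print("mean after burn-in:", draws[1000:].mean())
```

The draws settle around the location minimising the empirical energy score; the discretisation and minibatch noise that SGLD does not correct for are the bias an exact sampler like the Boomerang avoids, at a higher cost.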

Masterclass in Bayesian Asymptotics, Université Paris Dauphine, 18-22 March 2024

Posted in Books, pictures, Statistics, Travel, University life with tags 2024, Bayesian asymptotics, Bayesian foundations, Bayesian inference, Bernstein-von Mises theorem, bois de Boulogne, course, empirical Bayes methods, foundation lectures, France, graduate course, IMS Lecture Notes, Judith Rousseau, marginal likelihood, MASH, Master program, masterclass, Paris, PariSanté campus, Université Paris Dauphine, University of Oxford on December 8, 2023 by xi'an

On the week of 18-22 March 2024, Judith Rousseau (Paris Dauphine & Oxford) will teach a Masterclass on Bayesian asymptotics. The masterclass takes place in Paris (on the PariSanté Campus) and consists of morning lectures and afternoon labs. Attendance is free with compulsory registration before 11 March (since the building is not accessible without prior registration).

The plan of the course is as follows:

Part I: Parametric models

In this part, well- and mis-specified models will be considered.

– Asymptotic posterior distribution: asymptotic normality of the posterior, penalization induced by the prior and the Bernstein-von Mises theorem. Regular and nonregular models will be treated.

– Marginal likelihood and consistency of Bayes factors/model selection approaches.

– Empirical Bayes methods: asymptotic posterior distribution for parametric empirical Bayes methods.
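As a toy illustration of the Bernstein-von Mises phenomenon in Part I (my own example, not taken from the course material), take Bernoulli data with a uniform prior, so that the posterior is Beta(k+1, n−k+1), and compare it with the normal approximation N(θ̂, θ̂(1−θ̂)/n) centred at the MLE with the inverse Fisher information as variance. The sketch computes their total variation distance by a crude Riemann sum on a grid; the true value θ₀ = 0.3, the grid size, the seed and the helper names are arbitrary choices of mine.

```python
import math

import numpy as np


def beta_pdf(x, a, b):
    """Beta(a, b) density, computed on the log scale for numerical stability."""
    log_norm = math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
    return np.exp(log_norm + (a - 1) * np.log(x) + (b - 1) * np.log1p(-x))


def normal_pdf(x, mu, sd):
    return np.exp(-0.5 * ((x - mu) / sd) ** 2) / (sd * math.sqrt(2 * math.pi))


def bvm_tv_distance(n, theta0=0.3, seed=0):
    """TV distance between the exact posterior and its BvM normal approximation."""
    rng = np.random.default_rng(seed)
    k = rng.binomial(n, theta0)               # number of successes in n trials
    a, b = k + 1, n - k + 1                   # Beta posterior under a uniform prior
    mle = k / n
    sd = math.sqrt(mle * (1 - mle) / n)       # inverse Fisher information at the MLE
    grid = np.linspace(1e-6, 1 - 1e-6, 200001)
    dx = grid[1] - grid[0]
    diff = np.abs(beta_pdf(grid, a, b) - normal_pdf(grid, mle, sd))
    return 0.5 * diff.sum() * dx              # Riemann-sum approximation of TV


if __name__ == "__main__":
    for n in (50, 500, 5000):
        print(n, bvm_tv_distance(n))
```

The distance shrinks roughly like 1/√n in this regular model, which is the content of the theorem; nonregular models, also on the programme, are precisely where such an approximation can fail.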

Part II: Nonparametric and semiparametric models

– Posterior consistency and posterior convergence rates: statistical loss functions using the theory initiated by L. Schwartz and developed by Ghosal and van der Vaart, results on less standard or less well-behaved losses.

– Semiparametric Bernstein-von Mises theorems.

– Nonparametric Bernstein-von Mises theorems and uncertainty quantification.

– Stepping away from pure Bayes approaches: generalized Bayes, one-step posteriors and cut posteriors.
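For the cut posteriors of the last item, here is a minimal sketch in a made-up two-module Gaussian model (entirely my assumption, with unit noise variances and N(0, τ²) priors): module 1 has data zᵢ ~ N(φ, 1) and module 2 has data yⱼ ~ N(φ + θ, 1). The cut posterior draws φ from p(φ | z) alone and then θ from p(θ | y, φ), so that the second module cannot feed back into φ, unlike the full posterior. Both stages are conjugate in this toy, hence exact draws without MCMC; the function name `cut_posterior` is hypothetical.

```python
import numpy as np


def cut_posterior(z, y, tau=10.0, n_draws=20000, seed=0):
    """Two-stage (cut) sampling in a toy Gaussian two-module model."""
    rng = np.random.default_rng(seed)
    prior_prec = 1.0 / tau**2
    # Stage 1: phi | z only; module-2 data y never influence phi.
    prec_phi = len(z) + prior_prec
    phi = rng.normal(z.sum() / prec_phi, 1.0 / np.sqrt(prec_phi), size=n_draws)
    # Stage 2: theta | y, phi, one conjugate draw per phi draw.
    prec_theta = len(y) + prior_prec
    mean_theta = (y.sum() - len(y) * phi) / prec_theta
    theta = rng.normal(mean_theta, 1.0 / np.sqrt(prec_theta))
    return phi, theta


if __name__ == "__main__":
    rng = np.random.default_rng(3)
    z = rng.normal(1.0, 1.0, size=100)   # module 1 data, phi = 1
    y = rng.normal(1.5, 1.0, size=50)    # module 2 data, phi + theta = 1.5
    phi, theta = cut_posterior(z, y)
    print(phi.mean(), theta.mean(), theta.var())
```

The variance of θ under the cut combines the conditional variance 1/(m + τ⁻²) with the propagated uncertainty on φ from module 1 alone, which is what makes the cut posterior robust to a misspecified second module, at the price of not being a standard Bayesian posterior.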