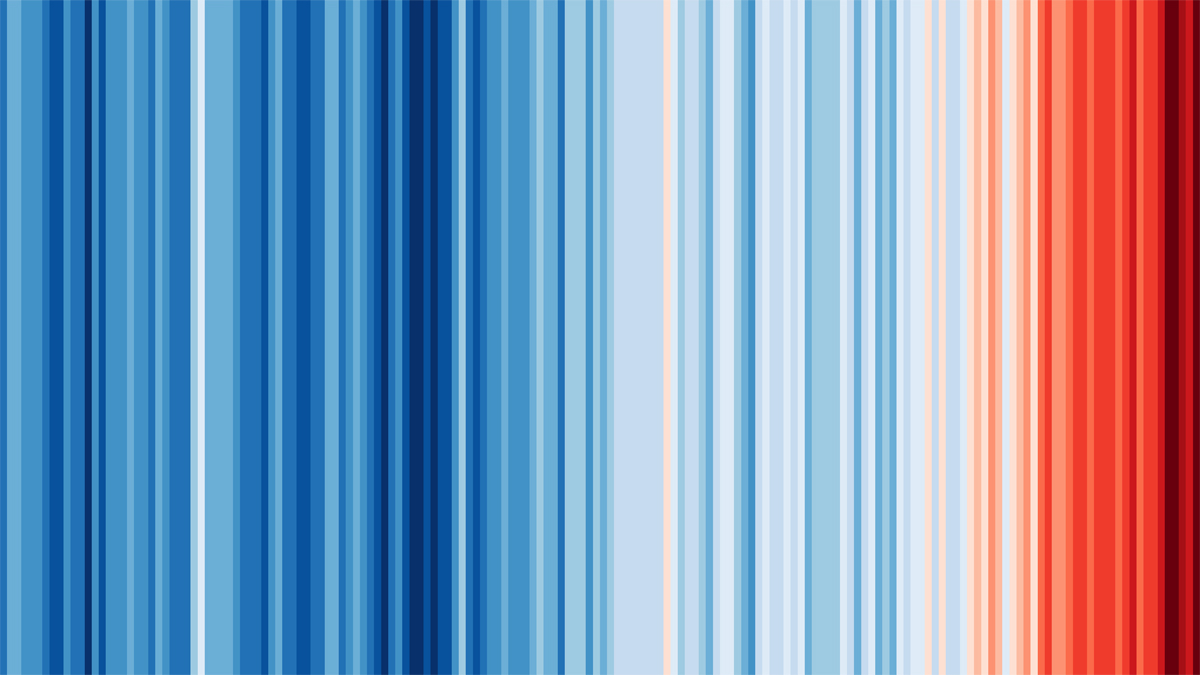

Just recently, I came across this representation of the (global) temperature rise over the period 1850-2020, made by Professor Ed Hawkins from the University of Reading. It is powerful, artsy, reminding me of the Everything mural in the Zeeman Building at the University of Warwick, and visually most appealing as a poster about the urgency for action. It nonetheless remains a poor statistical graph, in that it is a 2d presentation of a 1d dataset, the time series of the yearly average temperatures, missing the opportunity to exploit the second axis by, e.g., taking a stripe of the Earth from South Pole to North Pole or the daily temperature throughout the year. It is also lacking a scale, not only by omitting the dates on the first axis, but also by choosing an arbitrary if dramatic colour range [unable to incorporate the so-far ultimate 2023?!]. (And with the visual appeal comes the danger of turning it into yet another marketing gimmick, hence cancelling its message.)

Archive for climate change

warming stripes [but 1d]

Posted in Kids, pictures, Statistics, Travel with tags art, climate change, data graphics, Ed Hawkins, extreme temperatures, fresque, heating, Ian Davenport, Little Ice Age, University of Reading, University of Warwick, Zeeman building on April 15, 2024 by xi'an

Burning through the frozen south [and happy new year!]

Posted in Statistics with tags 2024, Antarctic Peninsula, Antarctica, climate change, Happy New Year, Michael Meredith, Royal Society, The Royal Society Photography Competition 2023 on January 1, 2024 by xi'an

j’adôre la bagnole [not!]

Posted in Books, pictures, Travel with tags car culture, carbon tax, climate change, congestion tax, ecological disaster, Emmanuel Macron, French politics, gilets jaunes, Libé, political cartoon on November 1, 2023 by xi'an

back to the future [no need!]

Posted in Books, Kids, Travel with tags climate change, CO2, desert, Earth, extinction, extreme temperatures, global warming, Nature, no future, Pangaea, solar radiation, supercontinent cycle, volcanoes on October 23, 2023 by xi'an

A news entry in Nature of 25 Sept 23 is reporting on a grim period for Earth, 250 million years away! By that time, continents will have drifted back, reunited into a new Pangaea supercontinent, Pangaea Ultima, which is likely to form around the equator, producing a deadly place where most life will go extinct. As happened 200 million years ago. While grateful for the item of information that continental drift is thus cyclic, I find it rather ironic that one worries about the extinction of life so far in the future, when it is likely to happen within a few decades!

fusing simulation with data science [18-19 July 2023]

Posted in pictures, Running, Statistics, Travel, University life with tags climate change, CRiSM, data assimilation, data science, fusion, Met Office, PDEs, simulation, simulator model, solver, tea, University of Warwick, weather modelling, workshop on June 5, 2023 by xi'an

In collaboration with the Met Office, my friend and Warwick colleague Rito Dutta is co-organising a two-day workshop in Warwick in July on the use of statistics and machine learning tools in weather prediction. Attendance is free, but registration is needed for tea breaks.
