Sirio Legramanti, Daniele Durante, and Pierre Alquier just arXived a massive paper on the concentration of discrepancy–based ABC posteriors via Rademacher complexity, which includes MMD and Wasserstein distance-based ABC methods. The paper provides sufficient conditions under which a discrepancy within the integral probability semimetrics class guarantees uniform convergence and concentration of the induced ABC posterior, without necessarily requiring regularity conditions on the underlying data generating process and the assumed statistical model, meaning that they also cover misspecified cases. In particular, the authors derive upper and lower bounds on the limiting acceptance probabilities for the ABC posterior to remain well–defined for a large enough sample size. They thus deliver an improved understanding of the factors that govern the uniform convergence and concentration properties of discrepancy–based ABC posteriors under a fairly unified perspective, which I deem a significant advance on the several papers my coauthors Espen Bernton, David Frazier, Mathieu Gerber, Pierre Jacob, Gael Martin, Judith Rousseau, Robin Ryder, and yours truly produced in that domain over the past years (although our Series B misspecification paper does not appear in the reference list!)

“…as highlighted by the authors, these [convergence] conditions (i) can be difficult to verify for several discrepancies, (ii) do not allow to assess whether some of these discrepancies can achieve convergence and concentration uniformly over P(Y), and (iii) often yield bounds which hinder an in–depth understanding of the factors regulating these limiting properties”

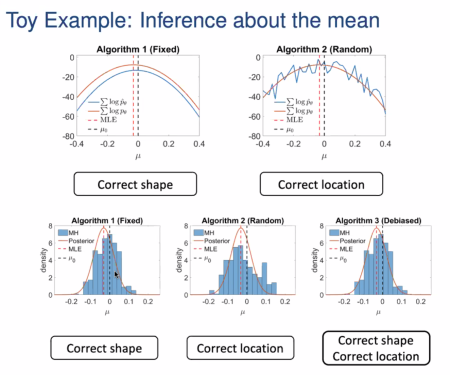

The first result is that, asymptotically in n and for a fixed, large-enough tolerance, the ABC posterior is always well–defined but only within a Rademacher ball of the pseudo-true posterior, a ball larger than the tolerance ε when the Rademacher complexity does not vanish in n (a feature on which my intuition is found to be lacking, since this complexity seems to relate solely to the class of functions adopted for the definition of said discrepancy!). When the tolerance ε(n) decreases to its minimum, as in our paper, the speed of concentration is similar to ours, namely slower than √n, assuming that the tolerance ε(n) decreases to its minimum slower than √n but faster than the Rademacher complexity.
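For readers who want the two objects spelled out, here are the textbook definitions as I would write them. The notation is mine rather than necessarily the paper's: 𝔉 is the class of functions indexing the discrepancy, the σ's are independent random signs, and the x's are iid draws from μ.

```latex
% Integral probability semimetric indexed by a function class \mathfrak{F}
\mathcal{D}_{\mathfrak{F}}(\mu_1,\mu_2)
  = \sup_{f\in\mathfrak{F}}
    \left| \int f \,\mathrm{d}\mu_1 - \int f \,\mathrm{d}\mu_2 \right|

% Rademacher complexity of \mathfrak{F} for a sample of size n from \mu,
% with \sigma_1,\dots,\sigma_n iid uniform on \{-1,+1\}
\mathfrak{R}_{\mu,n}(\mathfrak{F})
  = \mathbb{E}\left[ \sup_{f\in\mathfrak{F}}
    \left| \frac{1}{n}\sum_{i=1}^{n} \sigma_i f(x_i) \right| \right],
  \qquad x_1,\dots,x_n \overset{\text{iid}}{\sim} \mu
```

Taking 𝔉 to be the unit ball of a reproducing kernel Hilbert space gives MMD, while the class of 1-Lipschitz functions gives the Wasserstein-1 distance. For a kernel bounded by one, the standard bound on this complexity is of order 1/√n whatever μ, which is one way of seeing how the function class alone can drive the behaviour.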

“…the bound we derive crucially depends on [the Rademacher complexity], which is specific to each discrepancy D and plays a fundamental role in controlling the rate of concentration of the ABC posterior.”

The paper also opens towards non-iid settings (as in our Wasserstein paper) and generalized likelihood–free Bayesian inference à la Bissiri et al. (2016). A most interesting take on the universality of ABC convergence, thus, although assuming bounded function spaces from the start.
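To fix ideas on what a discrepancy–based ABC posterior looks like in practice, here is a minimal sketch of rejection ABC with an MMD discrepancy, using a Gaussian kernel so that the function class is bounded. Everything in it is an illustrative assumption of mine and not taken from the paper: the names `mmd2` and `abc_rejection`, the toy Normal location model, the N(0, 3²) prior, the unit bandwidth, and the tolerance of 0.3.

```python
import numpy as np


def mmd2(x, y, bandwidth=1.0):
    """Biased (V-statistic) estimate of the squared MMD, Gaussian kernel."""
    x = np.asarray(x, dtype=float).reshape(len(x), -1)
    y = np.asarray(y, dtype=float).reshape(len(y), -1)

    def gram(a, b):
        # Pairwise squared Euclidean distances, then Gaussian kernel values
        sq = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
        return np.exp(-sq / (2.0 * bandwidth ** 2))

    return gram(x, x).mean() + gram(y, y).mean() - 2.0 * gram(x, y).mean()


def abc_rejection(y_obs, prior_sampler, simulator, eps, n_draws, rng):
    """Keep the prior draws whose pseudo-sample is within eps of y_obs in MMD."""
    accepted = []
    for _ in range(n_draws):
        theta = prior_sampler(rng)
        z = simulator(theta, len(y_obs), rng)
        # Clip at zero to guard against tiny negative round-off
        if np.sqrt(max(mmd2(y_obs, z), 0.0)) <= eps:
            accepted.append(theta)
    return np.array(accepted)


# Toy example (hypothetical): Normal location model with unit variance
rng = np.random.default_rng(0)
y_obs = rng.normal(1.0, 1.0, size=100)
post = abc_rejection(
    y_obs,
    prior_sampler=lambda r: r.normal(0.0, 3.0),
    simulator=lambda theta, n, r: r.normal(theta, 1.0, size=n),
    eps=0.3,
    n_draws=1000,
    rng=rng,
)
print(len(post), post.mean())
```

The biased estimator is used for simplicity: it is non-negative up to round-off, so its square root can be compared directly with the tolerance. Shrinking `eps` trades acceptance rate for concentration, which is the regime the results above are about.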

For the final talk of this Spring season of the One World ABC webinar, we are very glad to welcome Mark Beaumont, a central figure in the development of ABC methods and inference! (And a coauthor of our ABC-PMC paper.)

Veronika Rockova (from Chicago Booth) gave a talk on this theme at the
