It started a bit awkwardly for me as I ran late, having accidentally switched to UK time the previous evening (despite a record-breaking biking-time to the University!), then the welcome desk could not find the key to the webinar room and I ended up following the first session from my office, by myself (and my teapot)… Until we managed to reunite in the said room (with an air quality detector!).

Software sessions are rather difficult to follow and I wonder what the ideal on-line version should be. We could borrow from the teaching experience newly gained over the past year, when we had to engage students without being able to roam the computer lab and look at their screens to push them into coding. It is however unrealistic to run a computer lab, unless a few “guinea pigs” could be selected in advance and show their progress, or lack thereof, during the session. In any case, thanks to the speakers who made the presentations of

- BSL (R)

- ELFI (Python)

- ABCpy (Python)

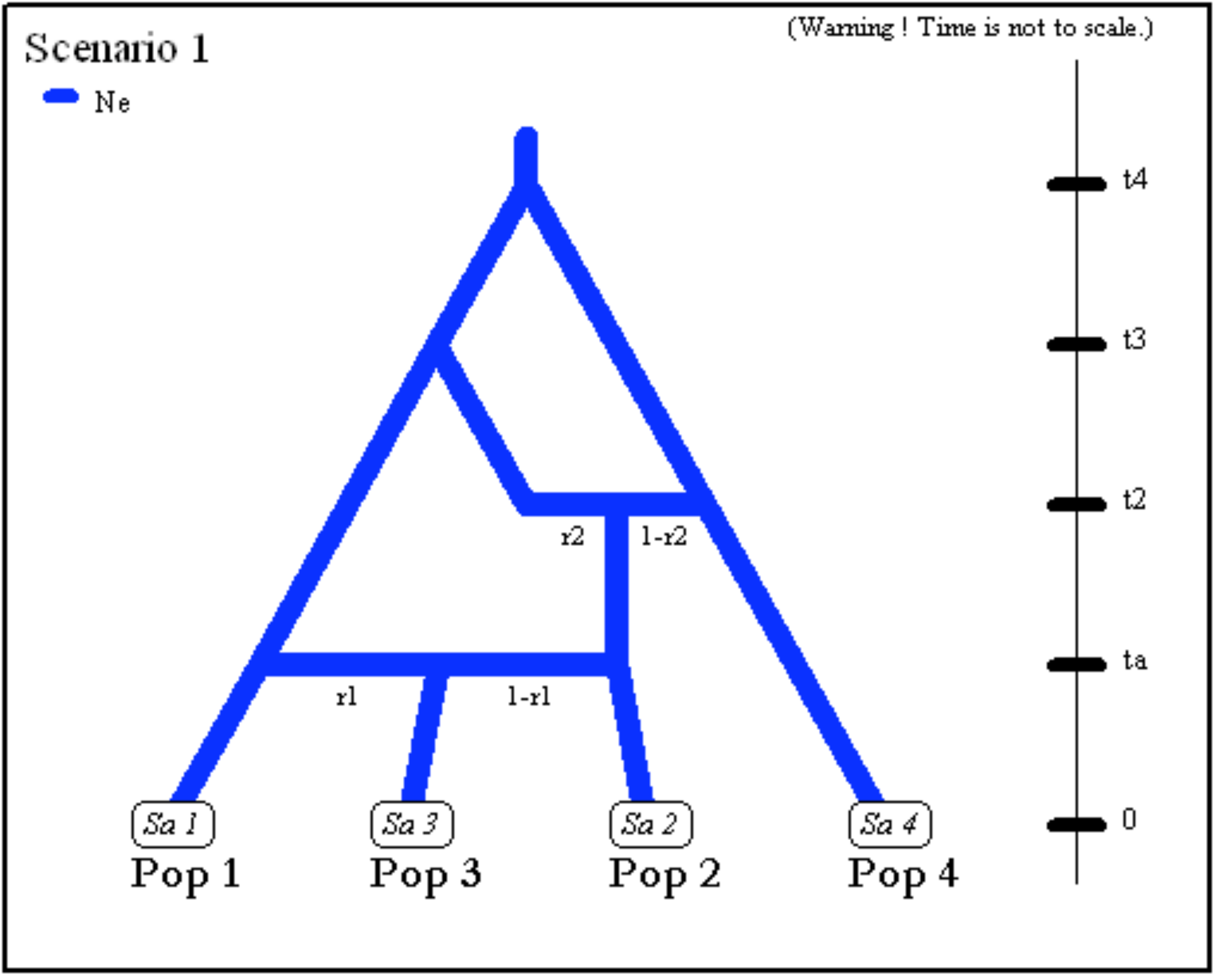

this morning/evening. (Just taking the opportunity to point out the publication of the latest version of DIYABC!).

Florence Forbes’ talk on using mixtures of experts was quite alluring (and generated online discussions during the break, recovering some of the fun of real conferences), esp. given my longtime interest in normalising flows in mixtures of regression (and more to come as part of our biweekly reading group!). Louis talked about gaining efficiency by not resampling the entire data in large network models. Edwin Fong brought martingales and infinite-dimensional distributions to the rescue, generalising Polya urns! And Justin Alsing discussed the advantages of estimating the likelihood rather than estimating the posterior, which sounds counterintuitive. With a return to mixtures as approximations, using instead normalising flows. With the worth-repeating message that ABC marginalises over nuisance parameters so easily! And a nice perspective on Bayesian decision, which does not occur that often in the ABC literature. Cecilia Viscardi made a link between likelihood estimation and large deviations à la Sanov, the rare event being associated with the larger distances, albeit dependent on a primary choice of the tolerance. Michael Gutmann presented an intriguing optimisation Monte Carlo approach from his AISTATS 2020 paper of last year, the simulated parameter being defined by a fiducial inversion. Reweighted by the prior times a Jacobian term, which struck me as a wee bit odd, i.e., using two distributions on θ. And Rito concluded the day by seeking approximate sufficient statistics by constructing exponential families whose components are themselves parameterised as neural networks with neural parameter ω. Leading to an unnormalised model because of the energy function, hence to the use of inference techniques on ω that do not require the constant, like Gutmann & Hyvärinen (2012). And using the (pseudo-)sufficient statistic as ABC summary statistic. Which still requires an exchange MCMC step within ABC.
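As a side illustration of the remark that ABC marginalises over nuisance parameters so easily, here is a minimal sketch of plain rejection ABC. It is a toy of my own and not taken from any of the talks: the Normal model, both priors, the (mean, standard deviation) summaries, and names like `abc_rejection` and `keep_frac` are all assumptions made for the example. The point is only that the marginal sample on μ is obtained by dropping the σ coordinate of the accepted pairs.

```python
import math
import random


def summary(y):
    """Summary statistics (assumed for this toy): sample mean and standard deviation."""
    n = len(y)
    m = sum(y) / n
    sd = math.sqrt(sum((v - m) ** 2 for v in y) / n)
    return m, sd


def abc_rejection(y_obs, n_sim=20000, keep_frac=0.01, seed=1):
    """Rejection ABC for a Normal(mu, sigma) sample, with sigma a nuisance parameter."""
    rng = random.Random(seed)
    n = len(y_obs)
    m_obs, sd_obs = summary(y_obs)
    draws = []
    for _ in range(n_sim):
        mu = rng.gauss(0.0, 5.0)       # assumed prior on the parameter of interest
        sigma = rng.uniform(0.5, 3.0)  # assumed prior on the nuisance parameter
        y = [rng.gauss(mu, sigma) for _ in range(n)]
        m, sd = summary(y)
        dist = math.hypot(m - m_obs, sd - sd_obs)
        draws.append((dist, mu, sigma))
    # Keep the closest simulations: the tolerance is set as a distance quantile.
    draws.sort(key=lambda d: d[0])
    kept = draws[: max(1, int(keep_frac * n_sim))]
    # Marginalising over sigma amounts to ignoring that coordinate.
    return [mu for _, mu, _ in kept]


# Pseudo-observed data, simulated with mu = 2 and sigma = 1 (illustrative values).
data_rng = random.Random(0)
y_obs = [data_rng.gauss(2.0, 1.0) for _ in range(30)]
mu_post = abc_rejection(y_obs)
mu_hat = sum(mu_post) / len(mu_post)  # ABC approximation of the posterior mean of mu
```

The accepted values in `mu_post` approximate the marginal posterior on μ, with no integration over σ ever written down, at the usual price of a dependence on the tolerance and on the choice of summaries.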